Confusion Matrix and it's 25 offspring: or the link between machine learning and epidemiology | Dr. Yury Zablotski

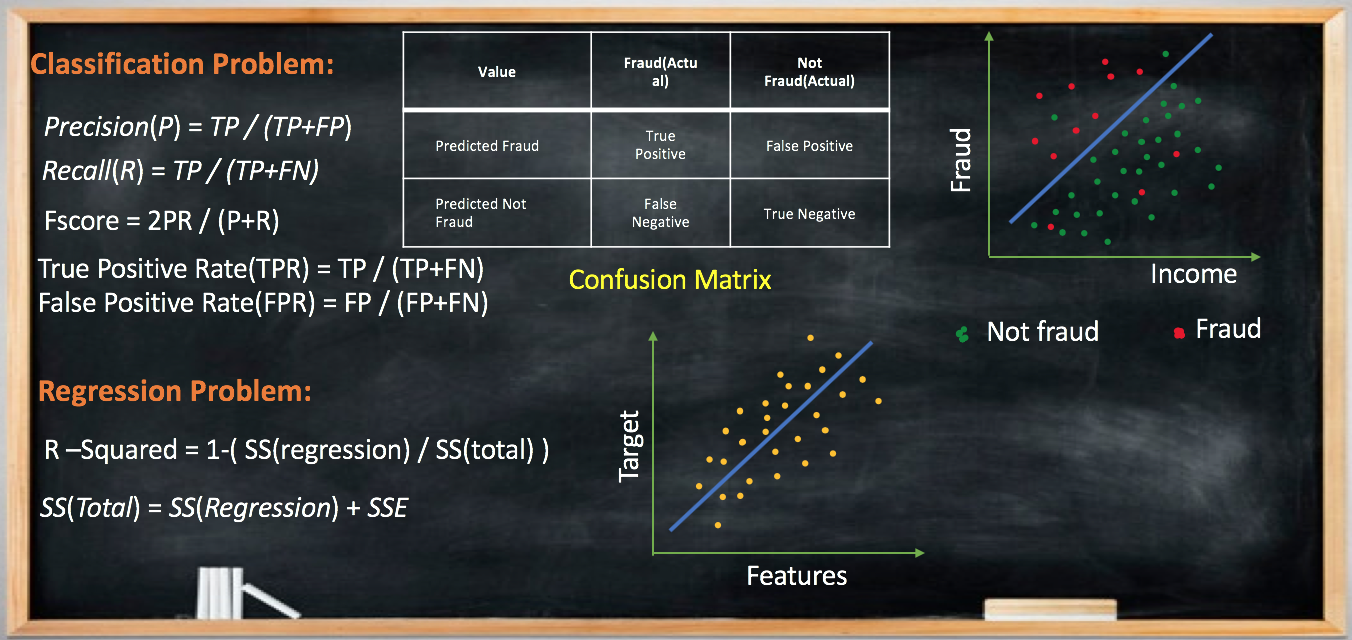

Importance of Mathews Correlation Coefficient & Cohen's Kappa for Imbalanced Classes | by Sarit Maitra | Medium

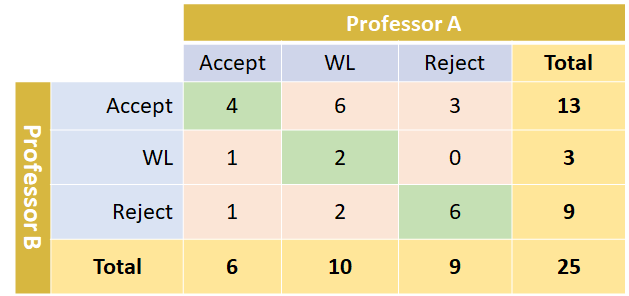

Cohen's Kappa: What it is, when to use it, and how to avoid its pitfalls | by Rosaria Silipo | Towards Data Science

Multi-Class Metrics Made Simple, Part III: the Kappa Score (aka Cohen's Kappa Coefficient) | by Boaz Shmueli | Towards Data Science

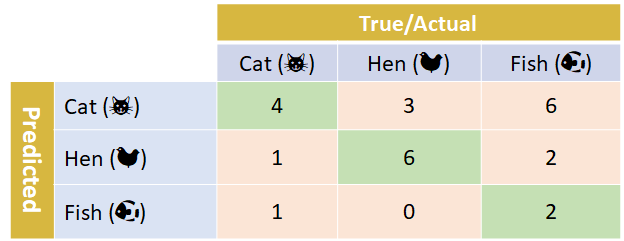

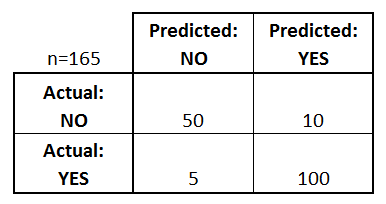

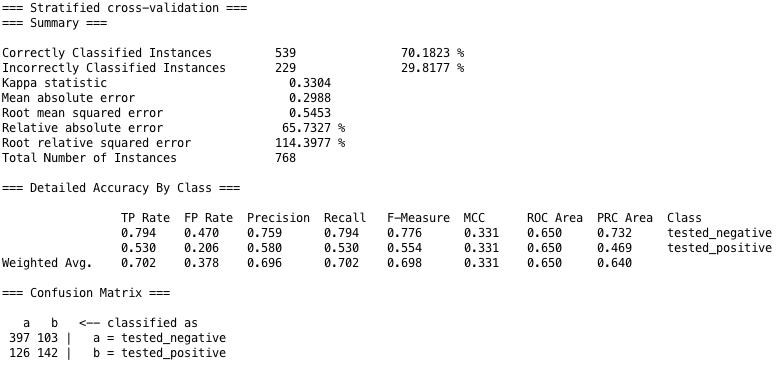

Metrics to evaluate classification models with R codes: Confusion Matrix, Sensitivity, Specificity, Cohen's Kappa Value, Mcnemar's Test - Data Science Vidhya

Rizal Fathony on Twitter: "6/ Our framework supports a wide variety of non-decomposable performance metrics that can be expressed as a sum of fractions over the entities in the confusion matrix. This includes

Evaluation Metrics in Machine Learning Models using Python | by Manoj Singh | Analytics Vidhya | Medium